10 Fun Real-Time AWS Kickstart Projects with Documentation

Fun Real-Time Projects to Learn AWS and Kickstart Your Cloud Career

There’s no denying the transformative power of hands-on learning. And when it comes to mastering Amazon Web Services (AWS), building real-world applications is the key. Dive into the exciting realm of AWS with these 10 fun projects, each designed to enhance your cloud skills and propel your career forward. Embrace the “learn as you build” philosophy and discover the joy of creating practical solutions using AWS services.

Accelerate Your Career with the AWS Projects Below

1. Launch a Static Website on Amazon S3

Prerequisites:

- A static website made up of HTML, CSS, JavaScript, and similar files.

Services Used:

- Amazon S3

- Amazon CloudFront

- Amazon Route 53

- AWS Certificate Manager

Get started with this cost-effective project that introduces you to core services like Amazon S3 and Amazon CloudFront. Migrate your static website to Amazon S3, create a CloudFront distribution, and manage your domain with Route 53. Secure it all with a valid SSL/TLS certificate using AWS Certificate Manager.
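As a rough illustration of the S3 side of this project, the sketch below builds the two documents a static site bucket typically needs: a website configuration and a public-read bucket policy. The bucket name and the `index.html` / `error.html` file names are assumptions for the example, not values from the tutorial.

```python
import json


def website_configuration(index_doc="index.html", error_doc="error.html"):
    """Return the website configuration structure S3 uses for static hosting."""
    return {
        "IndexDocument": {"Suffix": index_doc},
        "ErrorDocument": {"Key": error_doc},
    }


def public_read_policy(bucket_name):
    """Return a bucket policy that lets anyone read objects in the bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "PublicReadGetObject",
                "Effect": "Allow",
                "Principal": "*",
                "Action": "s3:GetObject",
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
            }
        ],
    }


if __name__ == "__main__":
    bucket = "example-static-site"  # hypothetical bucket name
    print(json.dumps(website_configuration(), indent=2))
    print(json.dumps(public_read_policy(bucket), indent=2))
```

With boto3, these structures could be passed to `put_bucket_website` (as `WebsiteConfiguration`) and `put_bucket_policy` (as a JSON string in `Policy`). A public policy only takes effect if the bucket's Block Public Access settings allow it, and once CloudFront sits in front of the bucket you may prefer to restrict reads to the distribution instead.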

2. Use CloudFormation to Launch an Amazon EC2 Web Server

Prerequisites:

- PuTTY or SSH client

Services Used:

- AWS CloudFormation

- Amazon EC2

- Amazon VPC (and subcomponents)

Efficiently deploy resources at scale using Infrastructure-as-Code (IaC) with CloudFormation. Explore writing a CloudFormation template to set up a web server on an Amazon EC2 instance. This approach is ideal for managing multiple environments (development, test, and production), because the same template can be reused for each one.
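To give a feel for what such a template contains, here is a minimal sketch that assembles one as a Python dictionary and prints it as JSON. It assumes the stack is created in a default VPC and that the AMI passed in is an Amazon Linux image (the `yum` and `httpd` commands depend on that); the logical names `WebServer` and `WebSecurityGroup` are illustrative choices.

```python
import json


def build_web_server_template(instance_type="t3.micro"):
    """Build a small CloudFormation template for one EC2 web server."""
    # Shell script run at first boot; assumes an Amazon Linux AMI.
    user_data = "\n".join([
        "#!/bin/bash",
        "yum install -y httpd",
        "systemctl enable --now httpd",
    ])
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": "Sketch: single EC2 web server",
        "Parameters": {
            # Supplied at stack creation so the template works in any region.
            "ImageId": {"Type": "AWS::EC2::Image::Id"},
            "KeyName": {"Type": "AWS::EC2::KeyPair::KeyName"},
        },
        "Resources": {
            "WebSecurityGroup": {
                "Type": "AWS::EC2::SecurityGroup",
                "Properties": {
                    "GroupDescription": "Allow inbound HTTP",
                    "SecurityGroupIngress": [{
                        "IpProtocol": "tcp",
                        "FromPort": 80,
                        "ToPort": 80,
                        "CidrIp": "0.0.0.0/0",
                    }],
                },
            },
            "WebServer": {
                "Type": "AWS::EC2::Instance",
                "Properties": {
                    "InstanceType": instance_type,
                    "ImageId": {"Ref": "ImageId"},
                    "KeyName": {"Ref": "KeyName"},
                    "SecurityGroupIds": [
                        {"Fn::GetAtt": ["WebSecurityGroup", "GroupId"]}
                    ],
                    "UserData": {"Fn::Base64": user_data},
                },
            },
        },
        "Outputs": {
            "PublicDnsName": {
                "Value": {"Fn::GetAtt": ["WebServer", "PublicDnsName"]}
            }
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_web_server_template(), indent=2))
```

Changing only the `instance_type` argument or the parameters passed at stack creation is one simple way to reuse the same template for development, test, and production.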

3. Add a CI/CD Pipeline to an Amazon S3 Bucket

Prerequisites:

- Static website

- Static website code checked into GitHub

Services Used:

- Amazon S3

- AWS CodePipeline

- AWS CodeStar

Automate your software delivery pipeline with continuous integration and continuous delivery (CI/CD). Deploy website changes to production automatically upon code check-in. Utilize the S3 bucket from the previous project as a starting point.

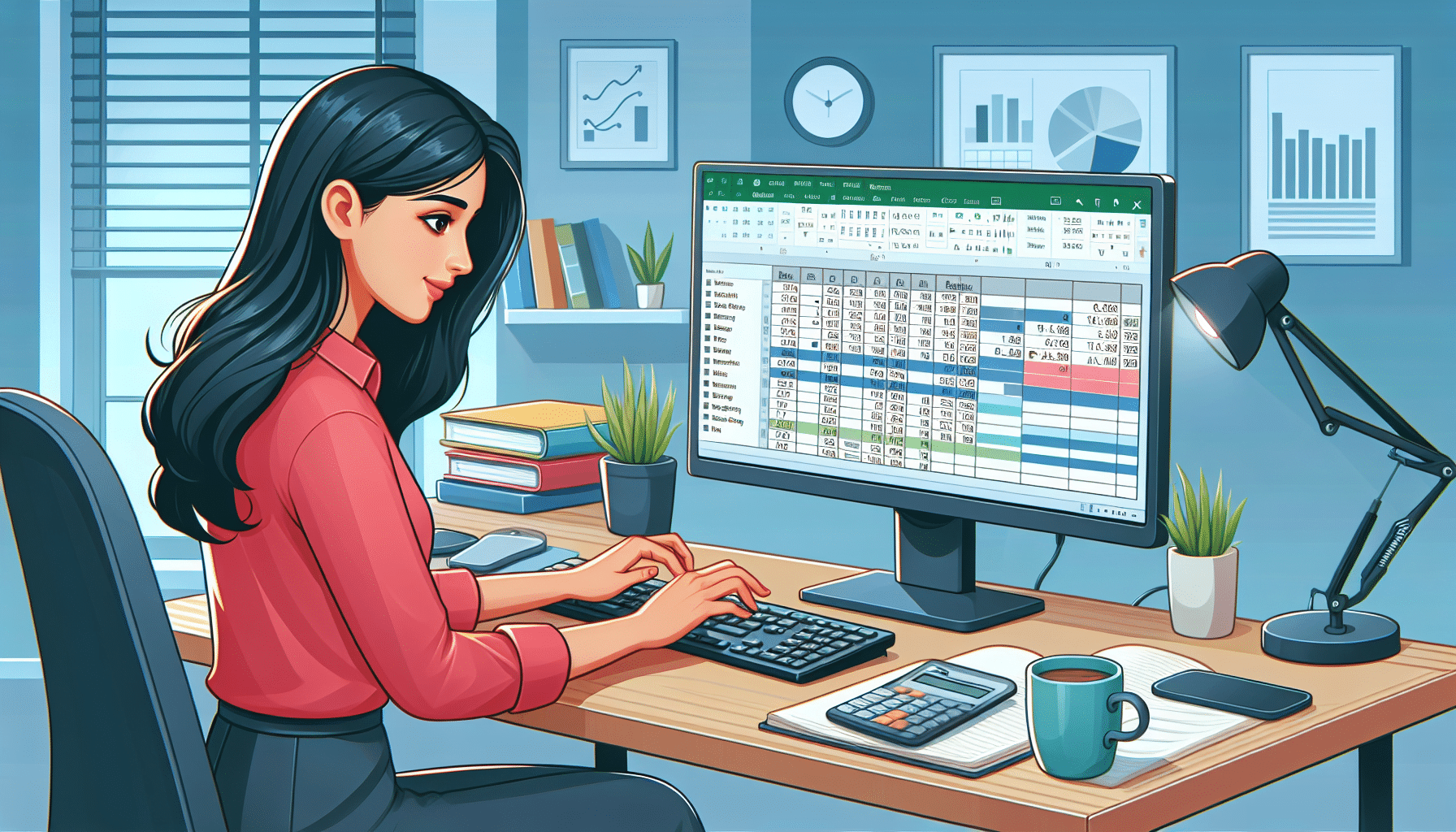

4. Publish Amazon CloudWatch Metrics to a CSV File using AWS Lambda

Prerequisites:

- AWS CLI installed on your local machine

- Text editor

Services Used:

- Amazon CloudWatch

- AWS Lambda

- AWS CLI

Export CloudWatch metrics to a CSV file using AWS Lambda. This project, suitable for those with some coding experience, provides hands-on experience with Lambda, a powerful AWS service.
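The core of this project can be sketched as two pieces: a pure function that turns CloudWatch datapoints into CSV text, and a Lambda handler that fetches the datapoints. The metric (`CPUUtilization` in the `AWS/EC2` namespace), the one-hour window, and the `instance_id` event field are assumptions chosen for illustration.

```python
import csv
import io
from datetime import datetime, timedelta, timezone


def datapoints_to_csv(datapoints, statistic="Average"):
    """Convert CloudWatch datapoints to CSV text, oldest first."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["timestamp", statistic.lower(), "unit"])
    for point in sorted(datapoints, key=lambda p: p["Timestamp"]):
        writer.writerow([
            point["Timestamp"].isoformat(),
            point[statistic],
            point.get("Unit", ""),
        ])
    return buffer.getvalue()


def lambda_handler(event, context):
    """Fetch one hour of CPU metrics and return them as CSV text."""
    import boto3  # imported lazily so the CSV helper runs without boto3

    cloudwatch = boto3.client("cloudwatch")
    end = datetime.now(timezone.utc)
    response = cloudwatch.get_metric_statistics(
        Namespace="AWS/EC2",
        MetricName="CPUUtilization",
        # "instance_id" is a hypothetical field of the invoking event.
        Dimensions=[{"Name": "InstanceId", "Value": event["instance_id"]}],
        StartTime=end - timedelta(hours=1),
        EndTime=end,
        Period=300,
        Statistics=["Average"],
    )
    return datapoints_to_csv(response["Datapoints"])
```

Keeping the CSV conversion separate from the API call makes it easy to test locally with hand-written datapoints before deploying the function.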

5. Train and Deploy a Machine Learning Model using Amazon SageMaker

Prerequisites:

- Dataset used for training

- Familiarity with Python

Services Used:

- Amazon SageMaker

Delve into machine learning with Amazon SageMaker. Train a machine learning model to predict consumer behavior using Python. This project caters to those curious about machine learning and offers a guided approach.

6. Create a Chatbot that Translates Languages using Amazon Translate and Amazon Lex

Prerequisites:

- Dataset used for training

- Familiarity with Python

Services Used:

- Amazon Lex

- Amazon Translate

- AWS Lambda

- AWS CloudFormation

- Amazon CloudFront

- Amazon Cognito

Build a conversational interface for language translation using Amazon Lex and Amazon Translate. This project introduces you to AI services, AWS Lambda, CloudFormation, CloudFront, and more.
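The translation step at the heart of the chatbot might look like the sketch below. It wraps the Amazon Translate `translate_text` call in a small helper that accepts any client object, so it can be exercised offline; `FakeTranslateClient` is a hypothetical stand-in written for this example, not part of any AWS SDK. How the helper is wired into a Lex fulfillment Lambda depends on the Lex version and is left out.

```python
def translate_utterance(translate_client, text, target_language,
                        source_language="auto"):
    """Translate text using an Amazon Translate style client.

    "auto" asks the service to detect the source language.
    """
    response = translate_client.translate_text(
        Text=text,
        SourceLanguageCode=source_language,
        TargetLanguageCode=target_language,
    )
    return response["TranslatedText"]


class FakeTranslateClient:
    """Offline stand-in (hypothetical) so the sketch runs without AWS."""

    _phrases = {("hello", "es"): "hola", ("thank you", "fr"): "merci"}

    def translate_text(self, Text, SourceLanguageCode, TargetLanguageCode):
        # Unknown phrases are echoed back unchanged.
        translated = self._phrases.get((Text.lower(), TargetLanguageCode), Text)
        return {
            "TranslatedText": translated,
            "SourceLanguageCode": SourceLanguageCode,
            "TargetLanguageCode": TargetLanguageCode,
        }


if __name__ == "__main__":
    print(translate_utterance(FakeTranslateClient(), "Hello", "es"))
```

In a real deployment the first argument would be `boto3.client("translate")`, and the Lambda function's role would need permission to call Amazon Translate.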

7. Deploy a Simple React Web Application using AWS Amplify

Prerequisites:

- Node.js

- GitHub / Git

- Text editor

Services Used:

- AWS Amplify

- Amazon Cognito

- Amazon DynamoDB

- AWS AppSync

- Amazon S3

- Amazon CloudFront

Experience the power of AWS Amplify by deploying a React application with DynamoDB, Cognito, AppSync, S3, and CloudFront. This project, which can be completed in under 50 minutes, demonstrates the efficiency of full-stack web application development on AWS.

8. Create an Alexa Skill that Provides Study Tips using AWS Lambda and DynamoDB

Prerequisites:

- An account on the Amazon Developer Portal

- DynamoDB table populated with study tips

- Echo device (optional)

Services Used:

- Alexa Skills Kit (ASK)

- AWS Lambda

- Amazon DynamoDB

Dive into Alexa skill development by building an Alexa skill backed by AWS Lambda and DynamoDB. This project serves up helpful study tips, making it a gentle introduction to both cloud and AI.
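A skill backend of this kind could be sketched as a Lambda handler that returns responses in the Alexa JSON format. In this sketch the in-memory `STUDY_TIPS` list stands in for the DynamoDB table, and the intent name `GetStudyTipIntent` is a hypothetical name that would have to match the skill's interaction model.

```python
import random

# Stand-in for the DynamoDB table of study tips (illustrative content).
STUDY_TIPS = [
    "Study in short, focused sessions with regular breaks.",
    "Explain the topic out loud as if teaching someone else.",
    "Review your notes within a day of taking them.",
]


def build_speech_response(text, end_session=True):
    """Wrap plain text in the Alexa response envelope."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": end_session,
        },
    }


def lambda_handler(event, context, tips=STUDY_TIPS):
    """Route an Alexa request to a spoken reply."""
    request = event.get("request", {})
    if request.get("type") == "LaunchRequest":
        return build_speech_response(
            "Welcome. Ask me for a study tip.", end_session=False
        )
    if request.get("type") == "IntentRequest":
        intent_name = request.get("intent", {}).get("name")
        if intent_name == "GetStudyTipIntent":  # hypothetical intent name
            return build_speech_response(random.choice(tips))
        return build_speech_response("Sorry, I did not understand that.")
    # Other request types, such as SessionEndedRequest, get an empty response.
    return {"version": "1.0", "response": {}}
```

Swapping the list for a DynamoDB read would only change where `tips` comes from; the response format stays the same.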

9. Recognize Celebrities using Amazon Rekognition, AWS Lambda, and Amazon S3

Prerequisites:

- Images of celebrities

Services Used:

- Amazon S3

- AWS Lambda

- Amazon Rekognition

Explore the fun side of Amazon Rekognition by triggering a Lambda function when an image is uploaded to an S3 bucket. Identify celebrities in photos using the RecognizeCelebrities API.
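The flow described here (S3 upload, Lambda trigger, RecognizeCelebrities call) can be sketched as follows. The 90 percent confidence threshold is an arbitrary choice for the example, and `FakeRekognitionClient` is a hypothetical stub that lets the logic run without AWS credentials.

```python
from urllib.parse import unquote_plus


def extract_s3_object(event):
    """Pull the bucket name and object key out of an S3 event notification."""
    record = event["Records"][0]
    bucket = record["s3"]["bucket"]["name"]
    # Object keys arrive URL-encoded in S3 event notifications.
    key = unquote_plus(record["s3"]["object"]["key"])
    return bucket, key


def celebrity_names(rekognition_client, bucket, key, min_confidence=90.0):
    """Return names of celebrities matched at or above min_confidence."""
    response = rekognition_client.recognize_celebrities(
        Image={"S3Object": {"Bucket": bucket, "Name": key}}
    )
    return [
        face["Name"]
        for face in response["CelebrityFaces"]
        if face["MatchConfidence"] >= min_confidence
    ]


def lambda_handler(event, context):
    """Entry point for the S3-triggered Lambda function."""
    import boto3  # imported lazily so the helpers run without boto3

    bucket, key = extract_s3_object(event)
    return celebrity_names(boto3.client("rekognition"), bucket, key)


class FakeRekognitionClient:
    """Offline stand-in (hypothetical) returning a canned response."""

    def recognize_celebrities(self, Image):
        return {
            "CelebrityFaces": [
                {"Name": "Person A", "MatchConfidence": 99.0},
                {"Name": "Person B", "MatchConfidence": 50.0},
            ]
        }
```

For the handler to work in AWS, the function's execution role needs permission to read the bucket and to call Rekognition.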

10. Host a Dedicated Jenkins Server on Amazon EC2

Prerequisites:

- EC2 key pair

- SSH client or PuTTY

Services Used:

- Amazon EC2

- Amazon VPC (and subcomponents)

Spin up an EC2 instance and configure Jenkins, exposing you to EC2 and security considerations. This hands-on project lets you explore the world of Jenkins and further enhances your understanding of AWS services.
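One of the security considerations is which ports the instance exposes and to whom. As a sketch, the helper below builds security group ingress rules that open SSH (port 22) and the Jenkins web UI (port 8080, the Jenkins default) to a single administrator address range only. The function name and the example CIDR are illustrative assumptions.

```python
import ipaddress


def jenkins_ingress_rules(admin_cidr):
    """Build ingress rules limiting SSH and Jenkins access to one CIDR."""
    # Raises ValueError if the CIDR is malformed or has host bits set.
    ipaddress.ip_network(admin_cidr)

    def rule(port, description):
        return {
            "IpProtocol": "tcp",
            "FromPort": port,
            "ToPort": port,
            "IpRanges": [{"CidrIp": admin_cidr, "Description": description}],
        }

    return [rule(22, "SSH"), rule(8080, "Jenkins web UI")]


if __name__ == "__main__":
    # 203.0.113.0/24 is a documentation-only address range.
    for entry in jenkins_ingress_rules("203.0.113.7/32"):
        print(entry)
```

With boto3, a list like this could be passed as `IpPermissions` to the EC2 client's `authorize_security_group_ingress` call. Restricting both ports to a known address is stricter than opening them to `0.0.0.0/0`, which many quick-start guides do for convenience.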

Next Steps

Embark on your AWS learning journey with these exciting projects. As you navigate each tutorial, you’ll gain valuable skills that can significantly boost your cloud career. Whether you’re a beginner or looking to expand your AWS expertise, these hands-on experiences offer a practical and enjoyable way to learn.